

Here's your change

All the news and resources you need to take the pain out of payments for your business.

Forrester Consulting: Rethink Your Payment Strategy To Save Your Customers And Bottom Line

Discover more about the state of recurring payments across the globe in this exclusive report.

PDF | Enterprise

Latest articles


The 2027 inheritance tax changes matter more for accountants

Upcoming pension reforms carry a greater impact for most accountants.

4 min read | Small Business

A strategic blueprint for commercial VRPs

Get the first-mover advantage with a blueprint to roll out commercial VRPs.

1 min read

Featured articles

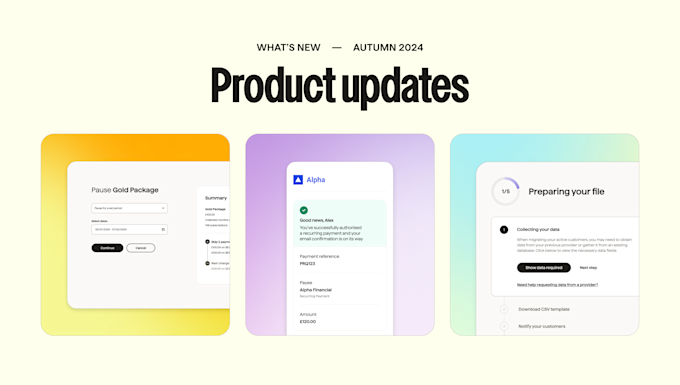

A better dashboard and a better experience: Product updates - Autumn 2024

See what improvements we’ve made this autumn.

2 min read | Open Banking

Browse by category

Technology

Insight into how the GoCardless Engineering team is improving recurring payments globally.

GoCardless

The latest updates from GoCardless, with information on our newest products and events we’re attending.

Accounting

Practical payment advice for accounting and advisory firms to help them empower their clients.

Enterprise

Recurring payments and Bank Debit expertise tailored to the world’s biggest subscription and invoicing businesses.

Global

Expanding your business internationally? Here's everything you need to know about payments.

Invoicing

Everything you need to know about sending and receiving invoices, and improving your processes.

Direct Debit

Read how Direct Debit is an easy, secure & convenient way to automate payment collection.

Popular downloads

![[Report] Global payment preferences for recurring B2B purchases](https://images.ctfassets.net/40w0m41bmydz/4fg6rX8ZuFVOU78XgCwo2k/70243c578b7edeb8083c457101198e8e/Card_Carousel-Chargebee-G2-s_CEO_Consult_Webinar.jpg?w=680&h=410&fl=progressive&q=50&fm=jpg)

[Report] Global payment preferences for recurring B2B purchases

We surveyed 4,990 businesses across 9 markets to determine which payment methods businesses prefer for different use cases.

PDF | Payments

The little churn book: Advice from SaaS business leaders and investors

We've collected advice on churn from some of the world’s most successful and outspoken investors and SaaS C-suite executives.

PDF | Retention

SCA Impact Playbook: subscription commerce and the SCA opportunity

Your comprehensive resource for understanding the challenges and opportunities that Strong Customer Authentication (SCA) presents for global subscription businesses.

PDF | Payments

Popular guides

Reference guides

Direct Debit: a beginner's guide

Everything you wanted to know about Direct Debit.

Invoicing: A complete guide

A complete guide to invoicing and invoices.

Alternatives to cards across Europe

A guide to major local payment methods in Europe.

Online Payments

Online payments for businesses: a complete guide.

Strong Customer Authentication (SCA)

Everything businesses need to know about SCA.

How to get your customers to pay by Direct Debit

How to get your customers to pay by Direct Debit.

BECS Direct Debit

A detailed guide to Direct Debit in Australia.

BECS Direct Debit New Zealand

A user guide for Direct Debit in New Zealand.

ACH: A guide to bank debit in the US

How to take ACH payments from customers in the US.

Pre-Authorized Debits

The complete guide to Direct Debit in Canada.

Direct Debit RFP Guide

Find out how to create an effective RFP.

Accountant’s Toolkit

Online Direct Debit resources for accountants.