Hutch: Inter-Service Communication with RabbitMQ

Last edited Jun 2024

Today we're open-sourcing Hutch, a tool we built internally, which has become a crucial part of our infrastructure. So what is Hutch? Hutch is a Ruby library for enabling asynchronous inter-service communication in a service-oriented architecture, using RabbitMQ. First, I'll cover the motivation behind Hutch by outlining some issues we were facing. Next, I'll explain how we used a message queue (RabbitMQ) to solve these issues. Finally, I'll go over what Hutch itself provides.

GoCardless's Architecture Evolution

GoCardless has evolved from a single, overweight Rails application to a suite of services, each with a distinct set of responsibilities. We have a service that takes care of user authentication, another that encapsulates the logic behind Direct Debit payments, another that serves our public API. So, how do these services talk to each other?

The go-to route for getting services communicating is HTTP. We're a web-focussed engineering team, used to building HTTP APIs, and debating the virtues of RESTfulness. So this is where we started. Each service exposed an HTTP API, which would be used via a corresponding client library from the dependent services. However, we soon encountered some issues:

- App server availability. There are several situations that cause inter-service communication to spike dramatically. We frequently receive and process information in bulk. For instance, the payment failure notifications we receive from the banks are processed once per day in a large batch. If another service needs to be made aware of these failures, an HTTP request would be sent to each service for each failure. This places our app servers under a significant amount of load. This issue could be mitigated by implementing special "bulk" endpoints, queuing requests as they arrive, or imposing rate limits, but not without the cost of additional complexity.

- Client speed. Often when we're sending a message from one service to another, we don't need a response immediately (or sometimes, ever). If a response isn't required, why are we waiting around for the server to finish processing the message? This situation is particularly detrimental if the communication occurs during an end-user's request-response cycle.

- Failure handling. When HTTP requests fail, they generally need to be retried. Implementing this retry logic properly can be tricky, and can easily cause further issues (e.g. thundering herds).

- Service coupling. Using HTTP for inter-service communication means that mappings between events and dependent services are required. For example: when a payment fails, services a, b, and c need to know; when a payment succeeds, services b, c, and d need to know; and so on. These dependency graphs become increasingly unwieldy as the system grows.
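To give a feel for why the retry logic mentioned under failure handling is fiddly, here is a minimal sketch of retrying with exponential backoff and random jitter, which is one common way to avoid a thundering herd. The helper name `with_retries` and all of its parameters are hypothetical, chosen for illustration; this is not code from our services.

```ruby
# Hypothetical helper: retry a block with exponential backoff and full jitter.
# `sleeper` and `rng` are injectable so the behaviour can be tested without
# actually sleeping.
def with_retries(max_attempts: 5, base_delay: 0.5, max_delay: 30.0,
                 sleeper: ->(seconds) { sleep(seconds) }, rng: Random.new)
  attempt = 0
  begin
    attempt += 1
    yield attempt
  rescue StandardError
    # Give up and re-raise the original error once attempts are exhausted.
    raise if attempt >= max_attempts

    # Double the ceiling on each attempt (capped at max_delay), then wait a
    # random fraction of it so that many clients failing at the same moment
    # do not all retry at the same moment.
    ceiling = [base_delay * (2**(attempt - 1)), max_delay].min
    sleeper.call(rng.rand * ceiling)
    retry
  end
end
```

Even this small version has to decide which errors are retryable, how long to wait, and when to give up, and every HTTP client library in every service needs the same care.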

It quickly became evident that most of these issues would be solved by using a message queue for communication between services. After evaluating a number of options, we settled on RabbitMQ. It's a stable piece of software that has been battle-tested at large organisations around the world, and has some useful features not found in other message brokers, which we can use to our advantage.

How we use RabbitMQ

Note: the remainder of this post assumes familiarity with RabbitMQ. I put together a brief summary of the basics, which may help as a refresher. Alternatively, the official tutorials are excellent.

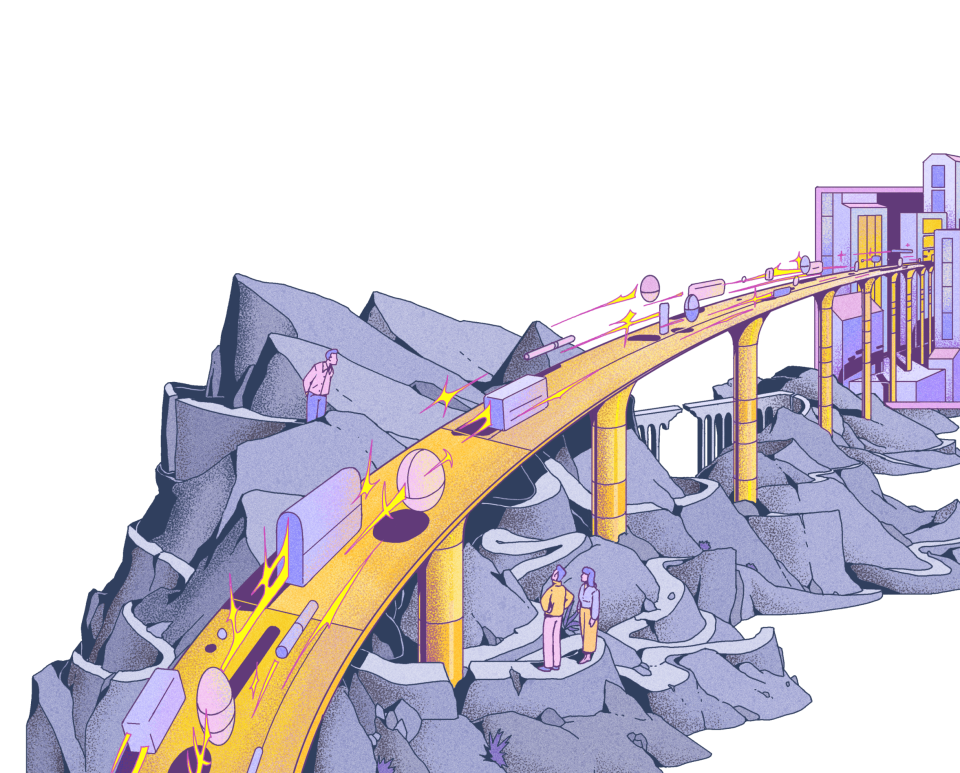

We run a single RabbitMQ cluster that sits between all of our services, acting as a central communications hub. Inter-service communication happens through a single topic exchange. All messages are assigned routing keys, which typically specify the originating service, the subject (noun) of the message, and an action (e.g. paysvc.mandate.transfer).

Each service in our infrastructure has a set of consumers, which handle messages of a particular type. A consumer is defined by a function, which processes messages as they arrive, and a binding key that indicates which messages the consumer is interested in. For each consumer, we create a queue, which is bound to the central exchange using the consumer binding key.
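As a rough illustration of how a topic exchange decides which queues receive a message, the sketch below reimplements the matching rule in plain Ruby: keys are split into dot-separated words, `*` matches exactly one word, and `#` matches zero or more words. RabbitMQ does this inside the broker; the function names and the queue names in `BINDINGS` are made up for this example.

```ruby
# Returns true if a binding key (which may contain wildcards) matches a
# routing key, following topic exchange rules.
def topic_match?(binding_key, routing_key)
  match_words(binding_key.split('.'), routing_key.split('.'))
end

def match_words(pattern, words)
  return words.empty? if pattern.empty?

  head, *rest = pattern
  case head
  when '#'
    # '#' may absorb zero or more words, so try every possible split point.
    (0..words.length).any? { |n| match_words(rest, words.drop(n)) }
  when '*'
    # '*' must consume exactly one word.
    !words.empty? && match_words(rest, words.drop(1))
  else
    words.first == head && match_words(rest, words.drop(1))
  end
end

# Hypothetical queues, each bound to the central exchange with a binding key.
BINDINGS = {
  'notifications.chargebacks' => 'paysvc.payment.chargedback',
  'audit.payment_events'      => 'paysvc.payment.*',
  'metrics.everything'        => '#'
}.freeze

# Names of the queues that would receive a message with this routing key.
def queues_for(routing_key)
  BINDINGS.select { |_queue, key| topic_match?(key, routing_key) }.keys
end

queues_for('paysvc.payment.chargedback')
# => ["notifications.chargebacks", "audit.payment_events", "metrics.everything"]
queues_for('paysvc.mandate.transfer')
# => ["metrics.everything"]
```

The publisher only chooses a routing key. Which services end up with a copy of the message is decided entirely by the bindings that consumers declare.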

RabbitMQ messages carry a binary payload: no serialisation format is enforced. We settled on JSON for serialising our messages, as JSON libraries are widely available in all major languages.
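A minimal sketch of that round trip, using Ruby's standard `json` library. The helper names `encode_message` and `decode_message` are hypothetical, as are the example field values.

```ruby
require 'json'

# Publisher side: serialise a Ruby hash into the string payload that is
# handed to RabbitMQ.
def encode_message(body)
  JSON.generate(body)
end

# Consumer side: parse the payload back into a hash with symbol keys.
def decode_message(payload)
  JSON.parse(payload, symbolize_names: true)
end

payload = encode_message({ payment_id: 'PM123', amount_pence: 1000 })
decode_message(payload)[:payment_id] # => "PM123"
```

One thing to keep in mind is that only JSON's own types survive the trip: strings, numbers, booleans, null, arrays and objects. Values such as symbols or times come back as plain strings, so message bodies are best kept to simple data.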

This setup provides us with a flexible way of managing communication between services. Whenever an action takes place that may interest another service, a message is sent to the central exchange. Any number of services may have consumers set up, ready to receive the message.

Hutch

There are several mature Ruby libraries for interfacing with RabbitMQ; however, they're relatively low-level libraries that provide access to the full suite of RabbitMQ's functionality. We use RabbitMQ in a specific, opinionated fashion, which resulted in a lot of repeated boilerplate code. So we set about building our conventions into a library that we could share between all of our services. We called it Hutch. Here's a high-level summary of what it provides:

- A simple way to define consumers (queues are automatically created and bound to the exchange with the appropriate binding keys)

- An executable and CLI for running consumers (akin to rake resque:work)

- Automatic setup of the central exchange

- Sensible out-of-the-box configuration (e.g. durable messages, persistent queues, message acknowledgements)

- Management of queue subscriptions

- Rails integration

- Configurable exception handling

Here's a brief example demonstrating how consumers are defined and how messages are published:

    # Producer in the payments service
    Hutch.publish('paysvc.payment.chargedback', payment_id: payment.id)

    # Consumer in the notifications service
    class ChargebackNotificationConsumer
      include Hutch::Consumer
      consume 'paysvc.payment.chargedback'

      def process(message)
        PaymentMailer.chargeback_email(message[:payment_id]).deliver
      end
    end

At its core, Hutch is simply a Ruby implementation of a set of conventions and opinions for using RabbitMQ: subscriber acks, durable messages, topic exchanges, JSON-encoded messages, UUID message ids, etc. These conventions could easily be ported to another language, enabling this same kind of communication in an environment composed of services written in many programming languages.
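To make a few of those conventions concrete, here is a small sketch of what a message might look like just before it is handed to a RabbitMQ client. It is not Hutch's actual implementation: `build_envelope` is a hypothetical helper, and the property names are simply taken from the AMQP 0-9-1 basic properties (`message_id`, `content_type`, `delivery_mode`, `timestamp`).

```ruby
require 'json'
require 'securerandom'

# Hypothetical helper that applies the conventions to a single message.
def build_envelope(routing_key, body)
  {
    routing_key: routing_key,               # e.g. service.subject.action
    payload: JSON.generate(body),           # JSON-encoded message body
    properties: {
      message_id: SecureRandom.uuid,        # unique id for each message
      content_type: 'application/json',
      delivery_mode: 2,                     # 2 = persistent in AMQP 0-9-1
      timestamp: Time.now.to_i
    }
  }
end

envelope = build_envelope('paysvc.payment.chargedback', { payment_id: 'PM123' })
envelope[:properties][:content_type] # => "application/json"
```

Because nothing here is Ruby-specific beyond the syntax, a service written in another language could produce and consume the same envelopes as long as it follows the same rules.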

Today, we're making Hutch open source. It's available here on GitHub, and any contributions or suggestions are very welcome. For questions and comments, discuss on Hacker News or tweet me at @harrymarr.